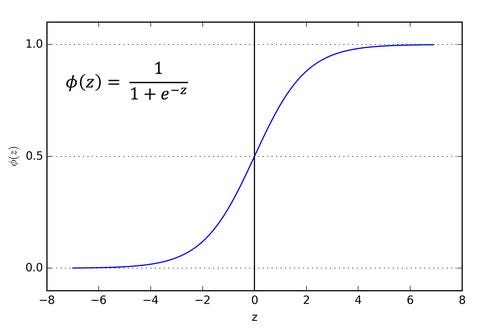

When a neuron uses the sigmoid function as its activation function, its output is guaranteed to lie between 0 and 1. The neuron passes a weighted sum of its inputs through the activation function, and the result serves as an input to the next layer. To review what an activation function is, the figure below shows the role of an activation function in one layer of a neural network.
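The computation described above can be sketched in plain Python as follows. This is a minimal illustration, not the author's code: the function names `sigmoid` and `dense_sigmoid_layer`, the per-neuron bias term, and the example weights are all assumptions made for the sketch. Each neuron forms a weighted sum of its inputs (plus a bias), applies the sigmoid, and the resulting list becomes the input to the next layer.

```python
import math
from typing import List


def sigmoid(z: float) -> float:
    """Logistic sigmoid 1 / (1 + e^-z), split into two branches so exp never overflows."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)  # z < 0, so e^z is in (0, 1)
    return ez / (1.0 + ez)


def dense_sigmoid_layer(x: List[float], weights: List[List[float]], biases: List[float]) -> List[float]:
    """Forward pass of one fully connected layer with a sigmoid activation.

    Hypothetical helper: weights[j] holds the incoming weights of neuron j,
    and biases[j] is that neuron's bias (an assumption of this sketch).
    """
    outputs = []
    for w_row, b in zip(weights, biases):
        # Weighted sum of the inputs for this neuron.
        z = sum(w_i * x_i for w_i, x_i in zip(w_row, x)) + b
        # The activation squashes z into the interval (0, 1).
        outputs.append(sigmoid(z))
    return outputs


# Usage: two neurons, three inputs (illustrative values only).
x = [0.5, -1.2, 3.0]
W = [[0.2, -0.4, 0.1], [1.5, 0.3, -0.8]]
b = [0.0, 0.1]
hidden = dense_sigmoid_layer(x, W, b)
print(hidden)  # each entry lies between 0 and 1 and feeds the next layer
```

Because every entry of `hidden` is already squashed into (0, 1), the next layer receives bounded inputs regardless of how large the weighted sums were.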

The sigmoid function is used as an activation function in neural networks.
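The post does not state the formula. Assuming "sigmoid" here means the logistic function (the most common choice for this activation), its definition, limits, and derivative are:

$$
\sigma(x) = \frac{1}{1 + e^{-x}}, \qquad \lim_{x \to -\infty} \sigma(x) = 0, \qquad \lim_{x \to +\infty} \sigma(x) = 1
$$

$$
\sigma'(x) = \frac{e^{-x}}{\left(1 + e^{-x}\right)^{2}} = \sigma(x)\bigl(1 - \sigma(x)\bigr)
$$

The second form follows from writing $\frac{e^{-x}}{(1 + e^{-x})^{2}} = \frac{1}{1 + e^{-x}} \cdot \frac{e^{-x}}{1 + e^{-x}}$ and noting that $\frac{e^{-x}}{1 + e^{-x}} = 1 - \sigma(x)$. The derivative is largest at $x = 0$, where $\sigma'(0) = \tfrac{1}{4}$.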

The sigmoid function is also called a squashing function, as its domain is the set of all real numbers and its range is (0, 1). Hence, whether the input to the function is a very large negative number or a very large positive number, the output is always between 0 and 1, and the same goes for any number between -∞ and +∞. The graph of the sigmoid function and its derivative also shows some important properties. The function is monotonically increasing, and it is differentiable everywhere in its domain. For values less than -10, the function's value is almost zero; for values greater than 10, it is almost one. Numerically, it is therefore enough to compute the function's value over a small range of numbers, e.g. [-10, +10].
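The properties listed above can be checked numerically with a short sketch. It assumes the logistic form 1 / (1 + e^-x) of the sigmoid; the helper names `sigmoid_derivative` and `central_difference` and the grid spacing of 0.5 are illustrative choices, not part of the post. The script samples [-10, 10], confirms that outputs stay in (0, 1) and increase monotonically, and compares the analytic derivative σ(x)(1 - σ(x)) against a finite-difference estimate.

```python
import math


def sigmoid(x: float) -> float:
    """Numerically stable logistic sigmoid 1 / (1 + e^-x)."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    ex = math.exp(x)  # x < 0, so e^x is in (0, 1) and cannot overflow
    return ex / (1.0 + ex)


def sigmoid_derivative(x: float) -> float:
    """Analytic derivative: sigma'(x) = sigma(x) * (1 - sigma(x))."""
    s = sigmoid(x)
    return s * (1.0 - s)


def central_difference(f, x: float, h: float = 1e-5) -> float:
    """Finite-difference estimate of f'(x), used only to check the formula."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


# Sample the interval [-10, 10] on a uniform grid with step 0.5.
grid = [-10.0 + 0.5 * i for i in range(41)]
values = [sigmoid(x) for x in grid]

# Squashing: every sampled output lies strictly between 0 and 1.
assert all(0.0 < v < 1.0 for v in values)
# Monotonically increasing on the sampled grid.
assert all(a < b for a, b in zip(values, values[1:]))

# Tails: near 0 at x = -10 and near 1 at x = 10.
print(f"sigmoid(-10) = {sigmoid(-10.0):.6f}, sigmoid(10) = {sigmoid(10.0):.6f}")

# The analytic derivative should agree with the finite-difference estimate.
max_err = max(abs(sigmoid_derivative(x) - central_difference(sigmoid, x)) for x in grid)
print(f"max derivative error on grid: {max_err:.2e}")
```

The two-branch form of `sigmoid` is a design choice: the naive `1 / (1 + math.exp(-x))` raises `OverflowError` in Python for inputs such as `x = -1000`, whereas this version only ever exponentiates a non-positive number. In floating point, inputs far outside [-10, 10] eventually round to exactly 0.0 or 1.0, which is consistent with computing the function only over a small range.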
